Botify vs GWT, Problem Detection Championship Finals

Let’s face it, this scoreboard is purely fictional. Actually, Google Webmaster Tools and Botify are on the same team. And they complement each other very well.

Google Webmaster Tools alerts and provides pointers. Botify empowers your investigation.

Botify’s Logs Analyzer helps locate issues and evaluate problem severity: it provides complete data where Google Webmaster Tools provides samples.

Let’s look at GWT’s most common automated errors (we’ll leave aside manual sanctions, alert messages about ‘unnatural’ external linking, malware detection on specific pages, etc.).

Google Webmaster Tools sends alert messages when crawl error rates rise. These error rates are available in the Crawl / Crawl errors section, which is divided into Site errors and URL errors.

Main URL errors alerts:

1) Increase in not found errors

2) Increase in soft 404 errors

3) Increase in ‘authorization permission’ errors

4) Increase in not followed pages

Main site errors alerts:

5) Google can’t access your site

6) Possible outages

Important alerts unrelated to crawl errors:

7) Googlebot found an extremely high number of URLs on your site

8) Big traffic change for top URL

1) Increase in ‘not found’ errors

What Google says:

‘Google detected a significant increase in the number of URLs that return a 404 (Page Not Found) error.’

Google Webmaster Tools provides:

- A sample list with priorities according to Google, and the date the error was detected.

How the Botify Logs Analyzer can help:

- Check which categories these 404 pages belong to: a type of page that should be crawled? One that shouldn’t? A non-identified type of url format (possibly a malformed url)?

- Check if (or which part of) these 404s are new: a graph specifically shows crawl on urls that were never crawled by Googlebot before.

- Check when this surge in 404s started: perhaps it started slowly some time before the alert.

- Locate pages where these 404s are linked from (with the website crawler that comes with the Botify Logs Analyzer)
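For readers who want to try the first three checks by hand on raw server logs, here is a minimal sketch. It is not how the Botify Logs Analyzer works internally. It assumes logs in the Apache/nginx "combined" format, identifies Googlebot by user-agent string only (which can be spoofed), and uses made-up category patterns and log lines.

```python
import re
from collections import Counter

# Assumes Apache/nginx "combined" log format.
LOG_LINE = re.compile(
    r'\[(?P<day>[^:]+):[^\]]+\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Hypothetical categories: replace with patterns matching your own templates.
CATEGORIES = [
    ("product", re.compile(r"^/product/\d+$")),
    ("search", re.compile(r"^/search")),
]


def categorize(path):
    """Return the first matching category name, or 'unidentified'."""
    for name, pattern in CATEGORIES:
        if pattern.search(path):
            return name
    return "unidentified"


def googlebot_404s(lines):
    """Count Googlebot 404 hits per (day, category)."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match["agent"] and match["status"] == "404":
            counts[(match["day"], categorize(match["path"]))] += 1
    return counts


# Made-up log lines, for illustration only.
GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'66.249.66.1 - - [10/Mar/2014:06:25:24 +0000] "GET /product/123 HTTP/1.1" 404 512 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Mar/2014:06:26:02 +0000] "GET /product/12%3 HTTP/1.1" 404 512 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [11/Mar/2014:09:00:10 +0000] "GET /product/456 HTTP/1.1" 200 2048 "-" "{GOOGLEBOT}"',
    '203.0.113.7 - - [11/Mar/2014:09:01:00 +0000] "GET /product/789 HTTP/1.1" 404 512 "-" "Mozilla/5.0"',
]
for (day, category), hits in sorted(googlebot_404s(sample).items()):
    print(day, category, hits)
```

The earliest day on which a category appears in the output hints at when the surge started, and anything landing in "unidentified" is a candidate malformed url.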

2) Increase in ‘soft 404’ errors

What Google says:

‘Google detected a significant increase in URLs we think should return a 404 (Page Not Found) error but do not.’

Google Webmaster Tools provides:

- A sample list with priorities according to Google and the date each error was detected

How the Botify Logs Analyzer can help:

- Check Googlebot crawl status codes for categories the urls in Google’s sample list belong to, to see if the problem occurs with all pages from given templates or only some.

3) Increase in ‘authorization permission’ errors

What Google says:

‘Google detected a significant increase in the number of URLs we were blocked from crawling due to authorization permission errors.’

In other words, Googlebot is getting a “Forbidden” status code (http 403) when requesting some urls.

Either these pages should be crawled by Googlebot (and return http 200 – OK), or they should not, in which case Googlebot should not waste crawl resources trying to access these pages: they should be disallowed in the robots.txt file.
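Before deploying a new disallow rule, it can be worth checking that it blocks what you expect and nothing else. Here is a small sketch using Python's standard `urllib.robotparser`. The `/private/` path and the rules are hypothetical, and this parser's matching may differ from Googlebot's own in edge cases (wildcards, for instance), so treat it as a sanity check only.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content: /private/ stands in for the section
# that currently answers Googlebot with http 403.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(path, agent="Googlebot"):
    """True if the rules above let this user agent fetch the path."""
    return parser.can_fetch(agent, path)


for path in ("/products/42", "/private/account"):
    print(path, "allowed" if allowed(path) else "disallowed")
```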

Google Webmaster Tools provides:

- A sample list with priorities according to Google and the date each error was detected

How the Botify Logs Analyzer can help:

- Check http status codes for corresponding categories (determined from the urls in Google’s sample list), to see if the problem occurs with all pages from given templates or only some.

- Check overall forbidden crawl volume and ratio to assess problem severity.
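As a rough illustration of these two checks, the sketch below computes the share of each status code per category. It assumes you have already extracted (category, status) pairs for Googlebot from your logs; the category names and counts are invented.

```python
from collections import Counter, defaultdict


def status_breakdown(hits):
    """hits: iterable of (category, status) pairs from Googlebot log lines.

    Returns {category: {status: share of that category's crawl}}.
    """
    per_category = defaultdict(Counter)
    for category, status in hits:
        per_category[category][status] += 1
    return {
        category: {status: n / sum(counts.values()) for status, n in counts.items()}
        for category, counts in per_category.items()
    }


# Invented data: 2 of 3 crawled "account" pages are forbidden.
hits = [("account", 403), ("account", 403), ("account", 200), ("product", 200)]
breakdown = status_breakdown(hits)
for category, shares in breakdown.items():
    print(category, {status: f"{share:.0%}" for status, share in shares.items()})
```

A 403 share close to 100% in one category suggests a template-wide problem; a small share points to specific pages.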

4) Increase in not followed pages

What Google says:

‘Google detected a significant increase in the number of URLs that we were unable to completely follow.’

Examples of redirects which won’t be completed:

- Redirects to pages not found

- Chains of redirects (Google will only follow a limited number of redirects forming a chain)

- Loops of redirects (page A redirects to page B, which in turn redirects to page A)
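These three cases can be told apart with a few lines of code once you have a map of redirects (from a crawl, for instance). The sketch below works on an in-memory mapping. The `max_hops` value of 5 is an arbitrary assumption, since the exact number of redirects Google follows is not stated here, and the urls are made up.

```python
def follow_redirects(start, redirects, not_found=frozenset(), max_hops=5):
    """Follow `start` through `redirects` (a source -> target mapping).

    Returns (outcome, path), where outcome is one of:
    'ok', 'dead_end' (ends on a not-found page), 'loop' or 'too_long'.
    """
    path = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        if current in seen:
            return "loop", path + [current]
        path.append(current)
        seen.add(current)
        if len(path) - 1 > max_hops:  # number of hops taken so far
            return "too_long", path
    if current in not_found:
        return "dead_end", path
    return "ok", path


# Made-up redirect map: /a and /b loop, /c reaches /e in two hops.
redirects = {"/a": "/b", "/b": "/a", "/c": "/d", "/d": "/e"}
print(follow_redirects("/a", redirects))
print(follow_redirects("/c", redirects))
print(follow_redirects("/c", redirects, not_found={"/e"}))
```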

What GWT provides:

- A sample list with priorities according to Google and the date each error was detected

How the Botify Logs Analyzer can help:

- Check redirects volume, by type of redirected page

- Check redirect targets, through a website crawl (using the crawler that comes with the Logs Analyzer)

5) Google can’t access your site

What Google says:

A variety of messages warning about site-level issues that result in a peak of DNS problems, Server connectivity problems, or problems getting the site’s robots.txt file.

For example:

‘Over the last 24 hours, Googlebot encountered 89 errors while attempting to connect to your site. Your site’s overall connection failure rate is 3.5%.’

Beware of robots.txt fetch errors: Google will stop crawling!

For example:

*‘Over the last 24 hours, Googlebot encountered 531 errors while attempting to access your robots.txt. To ensure that we didn’t crawl any pages listed in that file, we postponed our crawl. Your site’s overall robots.txt error rate is 100.0%’*

Google will announce that its crawl is postponed even if the error rate is not 100%. In most examples we’ve seen, error rates were above 40%, but we’ve also seen the same message with a robots.txt fetch error rate below 10%.

What GWT provides:

- The type of error (DNS, server connectivity, access to the robots.txt file)

- A number of failed attempts and failure rate over the last 24 hours

- The ‘fetch as Google’ tool to try to request the robots.txt file as Googlebot would.

Of course the one to turn to regarding DNS and connectivity issues is your service provider.

How the Botify Logs Analyzer can help:

- Check Googlebot’s crawl on the robots.txt file (Tip: create a category just for the robots.txt url to be able to keep a close eye on it).

You will be able to see http status codes, not only for the last 24 hours, but for the full history: perhaps there were earlier minor episodes of robots.txt unavailability, ones that did not trigger any Google alert?

These could be error http status codes such as http 5XX (server error), or no http response at all, which shows up in the logs as a lower daily crawl volume on the robots.txt file.

Mapping those dates to the website’s update deployment schedule might help narrow down possible causes.

- In the case of connection failures: see if there was high activity from bots and users, causing a heavy server load that may have contributed to the high connection failure rate.
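The robots.txt history check described above could be sketched as follows. It assumes you have extracted (day, status) pairs for Googlebot requests to /robots.txt from your logs. The `low_volume_ratio` threshold of 0.5 and the sample numbers are arbitrary, and a day with no request at all will simply be absent from the report, so gaps in the dates deserve a look too.

```python
from collections import Counter, defaultdict
from statistics import median


def robots_txt_health(hits, low_volume_ratio=0.5):
    """hits: iterable of (day, status) pairs for Googlebot hits on /robots.txt.

    Returns {day: (total_hits, server_error_share, low_volume_flag)}.
    A day is flagged as low volume when its hits fall below
    low_volume_ratio times the median daily volume.
    """
    per_day = defaultdict(Counter)
    for day, status in hits:
        per_day[day][status] += 1
    totals = {day: sum(counts.values()) for day, counts in per_day.items()}
    typical = median(totals.values())
    report = {}
    for day, counts in per_day.items():
        errors = sum(n for status, n in counts.items() if status >= 500)
        low_volume = totals[day] < low_volume_ratio * typical
        report[day] = (totals[day], errors / totals[day], low_volume)
    return report


# Invented history: server errors on day 2, unusually few requests on day 3.
hits = (
    [("2014-03-01", 200)] * 10
    + [("2014-03-02", 200)] * 6
    + [("2014-03-02", 503)] * 4
    + [("2014-03-03", 200)] * 3
)
report = robots_txt_health(hits)
for day in sorted(report):
    print(day, report[day])
```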

6) Possible outages

What Google says:

‘While crawling your site, we have noticed an increase in the number of transient soft 404 errors’

This is quite similar to connection failures.

What GWT provides:

- The date and time around which Googlebot experienced the increase in transient soft 404 errors that led Google to suspect ‘possible outages’.

How the Botify Logs Analyzer can help:

- Check Googlebot crawl volume and status codes on the day the transient soft 404 errors happened.

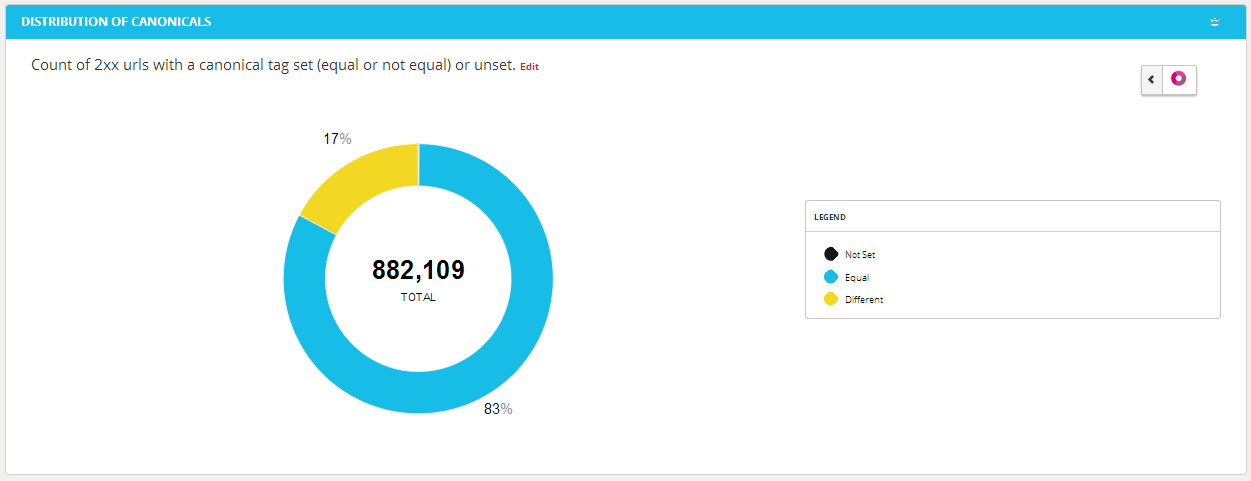

7) Googlebot found an extremely high number of URLs on your site

What Google says:

‘Googlebot encountered problems while crawling your site [site name]. Googlebot encountered extremely large numbers of links on your site. This may indicate a problem with your site’s URL structure. […]’

The message goes on to explain, in essence, that this does not look right: these urls must include duplicates, or pages that were not intended to be crawled by search engines.

GWT provides:

- A list of sample urls that may cause the problem. The list may not cover all problems, cautions Google.

How the Botify Logs Analyzer can help:

- Check if there was any recent change in Googlebot’s crawl activity

- Check which page categories consume a large amount of crawl but don’t generate organic visits

- Check if a significant amount of crawl is due to “warning urls” (urls that should not be crawled, and are identified as such in the logs analyzer)

- Check if there is a large amount of new pages crawled; if so, when this new crawl started, and if the start was abrupt

- Check the website structure with the crawler that comes with the Logs Analyzer: very high volumes are often associated with increased depth (see top reasons for website depth) and duplicates, two problems which often overlap.
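To make the crawl-versus-visits check concrete, here is a minimal sketch that flags categories taking a large share of Googlebot's crawl without bringing any organic visit. The per-category counts are assumed to come from your own log processing; the category names, the numbers and the 10% threshold are all invented for the example.

```python
def wasted_crawl(crawl_hits, organic_visits, min_share=0.10):
    """crawl_hits / organic_visits: {category: count} over the same period.

    Returns [(category, crawl_share)] for categories that take at least
    min_share of Googlebot's crawl and generate no organic visits,
    largest share first.
    """
    total = sum(crawl_hits.values())
    flagged = [
        (category, hits / total)
        for category, hits in crawl_hits.items()
        if hits / total >= min_share and organic_visits.get(category, 0) == 0
    ]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Invented numbers for illustration.
crawl_hits = {"product": 500, "faceted_search": 400, "calendar": 100}
organic_visits = {"product": 1200}
for category, share in wasted_crawl(crawl_hits, organic_visits):
    print(f"{category}: {share:.0%} of crawl, no organic visits")
```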

8) Big traffic change for top URL

What Google says:

‘search results clicks for [this url] have increased/decreased significantly.’

Google Webmaster Tools provides:

- The url with increased/decreased traffic.

How the Botify Logs Analyzer can help:

If this was caused by algorithm changes on Google’s part, chances are there are other significant trends on other pages.

- Check the list of pages that generate most organic traffic over a given period: export this data before and after the period where the big traffic change happened. Do this by category as there could be different trends depending on the type of page.

In the case of a significant traffic decrease, the page’s content should also be checked, as well as the website’s internal linking (which can be done with the crawler that comes with the Botify Logs Analyzer).
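Once the before and after data is exported, the comparison by category is simple arithmetic. The sketch below assumes two dictionaries of organic visits per category covering periods of equal length; the categories and figures are made up.

```python
def traffic_change(before, after):
    """before / after: {category: organic visits} for two equal-length periods.

    Returns {category: relative change}, with None for categories that
    had no visits in the first period (relative change is undefined).
    """
    changes = {}
    for category in sorted(set(before) | set(after)):
        old = before.get(category, 0)
        new = after.get(category, 0)
        changes[category] = (new - old) / old if old else None
    return changes


# Invented exports for illustration.
before = {"product": 1000, "article": 400}
after = {"product": 600, "article": 420, "brand": 50}
for category, change in traffic_change(before, after).items():
    label = "new" if change is None else f"{change:+.0%}"
    print(category, label)
```

If only one category moves sharply while the others stay flat, the cause is more likely tied to that page type than to a site-wide change.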