Crawl Budget Optimization for Classified Websites

Classified websites pose unique problems for SEOs — they often have millions of pages, multiple ways to sort and filter to find what you’re looking for, and a constantly changing inventory. At Botify, we often find that these qualities create the need for crawl budget optimization.

In order to understand how a classified website can benefit from crawl budget optimization, we need to understand what crawl budget is, when and why it’s an issue, and how one of our classified website customers was able to use crawl budget optimization to increase their crawl rate, and consequently, their organic search traffic.

What is crawl budget optimization?

Many people think that Google crawls the entire web. Although their goal is to organize the world’s information and make it universally accessible and useful, they simply don’t have the resources to get to everything. This has led to Google ignoring about half of the content on enterprise websites, based on our data. If you run a classified website with 10 million URLs, that means there’s a possibility Google only knows about 5 million of those pages.

If Google doesn’t crawl it, the page won’t be indexed. If the page isn’t indexed, searchers can’t discover it. If searchers can’t discover it, it can’t earn any organic search traffic, and more importantly, revenue.

Why is this happening?

There’s more content than ever before

One reason Google can’t get to all the content on the web is that there’s more content than ever before. There are trillions of web pages, and millions more being published every day. In fact, content creation is now so accessible that 90% of the world’s content has been produced in the last two years.

Google is struggling to keep pace.

We’re not sending clear enough signals to Google

On top of the sheer volume of content is the advent of the modern web. With expanding technology like JavaScript and Accelerated Mobile Pages (AMP), it’s more important than ever to set up our websites in a way that makes our content easy for Google to find and understand. According to Google’s John Mueller, “The cleaner you can make your signals, the more likely we’ll use them.”

So what’s crawl budget, and how does that factor into all this?

Because Google has limited resources and is unable to get to everything, they have a budget that limits how much time they will spend on your website before leaving. The budget your site is allotted will depend on two primary factors:

- Crawl rate limit: Technical limitations based on your server response or Search Console settings

- Crawl demand: The popularity of your URLs on the web

Because Google won’t look at all your content, you need to optimize your website to make sure you’re directing Google’s attention toward your most important pages and away from your unimportant pages (pages you don’t want searchers to find in the index).

That’s right: according to our data, not only is Google ignoring half of enterprise website content, it is also spending time on non-indexable pages. As a result, enterprise websites get traffic from only about a third of their total pages.

What impacts Google’s crawl of the web?

Botify studied an enormous data set to see what appears to impact what Google does and does not crawl as it explores websites. We found five major qualities that seem to impact Google’s crawl budget the most:

1. The larger the website, the bigger the crawl budget issues

Botify’s data indicates that larger websites have bigger crawl budget issues. Enterprise websites (those with over one million pages) experience a 33% drop in crawl ratio when compared to smaller websites.

2. Non-indexable pages negatively impact crawl

In Botify, a URL is “indexable” if it:

- Responds with an HTTP 200 (OK) status code

- Is not the alternate version of a page (ex: canonicals to another URL)

- Has an HTML content type

- Does not include a noindex meta tag
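The four criteria above can be sketched as a single check. This is only an illustration, not Botify's implementation: the `Page` record and its field names are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Page:
    # Hypothetical record describing one crawled URL.
    url: str
    status_code: int
    content_type: str
    canonical_url: Optional[str] = None  # None means no canonical tag was found
    meta_robots: str = ""                # raw content of the robots meta tag


def is_indexable(page: Page) -> bool:
    """Apply the four criteria listed above to one crawled page."""
    if page.status_code != 200:
        return False
    if not page.content_type.lower().startswith("text/html"):
        return False
    # A canonical pointing at a different URL marks this page as an alternate.
    if page.canonical_url is not None and page.canonical_url != page.url:
        return False
    directives = {d.strip().lower() for d in page.meta_robots.split(",")}
    return "noindex" not in directives
```

A page that answers 200 with an HTML content type, no canonical to another URL, and no noindex directive passes; failing any one check makes it non-indexable.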

We found that, when 15% or more of a website’s pages are non-indexable, only 33% of its pages are crawled every month. When less than 5% of a website’s pages are non-indexable, that crawl rate goes up to 50%.

3. The amount of content per page can impact crawl

We found that, on average, pages with more content are crawled more frequently than pages with less content. Our results indicate that pages with more than 2,500 words are crawled twice as often as pages with fewer than 250 words.

4. Pages that take longer to load negatively impact crawl

Pages that load in less than 500 milliseconds are typically crawled about 50% more than pages that load in 500 to 1,000 milliseconds, and about 130% more than pages that take longer than 1,000 milliseconds to load.

5. Pages not linked in the site structure waste crawl budget

Pages that are not linked in the site structure (what Botify refers to as “orphan pages”) consume 26% of Google’s crawl budget. The graph below represents the average across our largest customers.

You may be wasting Google’s time by letting it crawl old content that you’ve removed from your website, possibly because it’s still located in your sitemap. It’s a good idea to check your log files to see if Google is visiting any orphaned pages on your website, or check your analytics to see if unlinked content is generating any traffic (you may uncover an opportunity to add a valuable page back into your site structure so it can generate even more organic search traffic for your website!).
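If you want to try the log file check yourself, here is a minimal sketch. It assumes your server writes combined-format access logs and that you already have the set of paths reachable through your site structure (for example, from a site crawl). The function names and sample lines are made up for illustration.

```python
import re

# Pulls the request path and user agent out of a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_paths(log_lines):
    """Paths requested by a user agent that claims to be Googlebot.

    The user agent string can be spoofed, so treat this as a first pass.
    """
    paths = set()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            paths.add(match.group("path"))
    return paths


def orphan_paths(log_lines, structure_paths):
    """Paths Googlebot requested that the site structure does not link to."""
    return googlebot_paths(log_lines) - set(structure_paths)


# Invented sample data for the example.
SAMPLE_LOG = [
    '66.249.66.1 - - [10/Oct/2023:13:55:36 +0000] "GET /listing/123 HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Oct/2023:13:55:40 +0000] "GET /make/ford HTTP/1.1" 200 8042 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.9 - - [10/Oct/2023:13:56:01 +0000] "GET /listing/999 HTTP/1.1" 200 4988 "-" "Mozilla/5.0 (X11; Linux x86_64) Firefox/118.0"',
]

print(orphan_paths(SAMPLE_LOG, {"/", "/make/ford"}))  # {'/listing/123'}
```

Any path this returns is worth a look: either remove it from your sitemap or link it back into the site structure.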

Crawl budget considerations for classified websites

Classified websites present a unique challenge when it comes to crawl budget optimization. To illustrate, let’s take a look at a success story in which one classified website took back control of their crawl budget, and as a result, saw big SEO wins.

The website: an auto marketplace with millions of URLs

Based in the U.S., this online vehicle marketplace helps people find the right car or truck that fits their exact search criteria, down to specific amenities, in their own neighborhood. A nationwide, yet local, service, the site uses industry-leading data aggregated through years in the auto industry to build patented technology for listing discovery.

The problem: Google was missing 99% of their pages

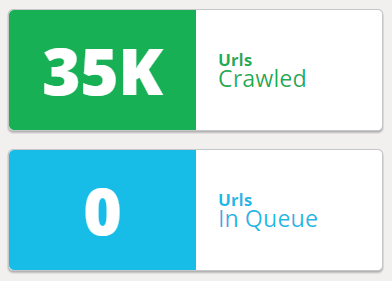

The website had roughly 10 million pages at the time we started working with them, and 99% of the website hadn’t been crawled by Google. That’s 9.9 million out of 10 million pages that are unlikely to ever receive any organic traffic from Google searches.

The culprit: Site structure, internal linking, and non-indexable URLs

When we began analyzing this classified website to diagnose why Google was only crawling 1% of its pages, we found a few noticeable issues.

1. Only 2% of crawled URLs were in the site structure

Of the 2.66 million URLs Google had crawled, the remaining 98% were not part of the site structure. Google was spending nearly all of its crawl budget on pages that were not attached to the site and not capable of driving organic traffic to the rest of the website.

2. Internal linking was not optimized

The first analysis also highlighted the lack of internal linking, resulting in extreme page depth and a lack of accessibility to crawlers. With only a few internal links per page (more than 1.3 million indexable pages had only a single internal link), content accessibility to crawlers became an obvious concern.

3. The majority of non-indexable pages came from a single page type

By isolating the various sections of the website into its major segments, we were able to see that the vast majority of non-indexable pages were coming from a single type of page, allowing us to focus our efforts on the main culprit.
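A rough version of this kind of segmentation can be sketched in a few lines. The example below assumes the first path component is a reasonable stand-in for page type, which will not hold for every site. The function names and sample URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def segment_of(url: str) -> str:
    """Use the first path component as a stand-in for the page type (an assumption)."""
    parts = [p for p in urlparse(url).path.split("/") if p]
    return parts[0] if parts else "home"


def non_indexable_by_segment(pages):
    """pages: iterable of (url, indexable) pairs.

    Returns each segment's share of all non-indexable pages.
    """
    counts = Counter(segment_of(url) for url, indexable in pages if not indexable)
    total = sum(counts.values())
    return {seg: n / total for seg, n in counts.items()} if total else {}


# Invented sample data for the example.
pages = [
    ("https://example.com/search/a", False),
    ("https://example.com/search/b", False),
    ("https://example.com/search/c", False),
    ("https://example.com/listing/1", False),
    ("https://example.com/listing/2", True),
    ("https://example.com/", True),
]
print(non_indexable_by_segment(pages))  # {'search': 0.75, 'listing': 0.25}
```

When one segment accounts for most of the non-indexable pages, as in this made-up sample, that segment is the place to start.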

The solution: eliminate crawl waste and direct Google to important pages

Because Google was wasting time on pages this classified website didn’t want in the index, they needed to update their robots.txt file. If you’re not familiar, the robots.txt file provides crawl instructions to search engine bots. With a few simple additions, this website was able to tell Googlebot to ignore non-critical, unimportant web pages.
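To make this concrete, here is a small sketch that checks a set of robots.txt rules with Python's standard-library parser. The rules are hypothetical: the `/search/` and `/compare/` paths are invented for illustration and are not the customer's actual directives.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules; these paths are made up for the example.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search/
Disallow: /compare/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(path: str, agent: str = "Googlebot") -> bool:
    """True if the rules above let the given user agent fetch the path."""
    return parser.can_fetch(agent, path)


print(allowed("/make/ford/mustang"))      # True
print(allowed("/search/ford-bluetooth"))  # False
```

Testing rules this way before deploying them helps you avoid accidentally blocking pages you do want crawled.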

The way this website was structured, it was also creating a practically unlimited number of URLs because of sorting, filtering, and other refinements (+Bluetooth + CD Player, etc.). Every refinement changed the URL, and Google had access to all of these URL variations, creating tens of millions of unnecessary URLs.
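To see how quickly refinements multiply, consider this sketch. It assumes each feature filter can be switched on or off independently and that each sort order produces its own URL. The URL pattern and parameter names are invented for the example.

```python
from itertools import combinations
from urllib.parse import urlencode


def refinement_urls(base, features, sort_orders):
    """Every URL produced by any subset of feature filters and any sort order."""
    urls = []
    for size in range(len(features) + 1):
        for combo in combinations(features, size):
            for sort in sort_orders:
                query = urlencode([("feature", f) for f in combo] + [("sort", sort)])
                urls.append(f"{base}?{query}")
    return urls


urls = refinement_urls(
    "/cars/ford", ["bluetooth", "cd-player", "sunroof"], ["price", "year"]
)
print(len(urls))  # 16, i.e. 2**3 filter subsets times 2 sort orders
```

Three filters and two sort orders already turn one category page into 16 URLs. With 20 filters, the same arithmetic gives more than two million variations per category.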

Just six weeks after the website implemented these robots.txt changes, they cut their known URLs by about half.

This helped direct Google away from unimportant pages, but this website still needed to do a better job of directing Google to its most important pages. For this, we recommended that they improve their internal linking structure. This took two main forms:

- Adding more links from the home page to vehicle make and model categories made those pages shallower on the site, increasing their perceived importance.

- Overhauling the breadcrumb structure emphasized the natural path the customer would take through the website (ex: Vehicle Make > Vehicle Model > Vehicle City, etc.)

These internal linking improvements resulted in a 15% increase in the average number of inlinks (internal links).
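Page depth here means the minimum number of clicks from the home page, which a breadth-first search over the internal link graph can measure. The sketch below uses a tiny made-up link graph to show how one extra home page link makes a model page shallower.

```python
from collections import deque


def page_depths(links, home):
    """Breadth-first search: minimum number of clicks from home to each page.

    links maps each page to the pages it links to.
    """
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Invented link graphs for the example.
before = {"/": ["/search"], "/search": ["/ford"], "/ford": ["/ford/mustang"]}
after = {**before, "/": ["/search", "/ford"]}  # add a home page link to the make

print(page_depths(before, "/")["/ford/mustang"])  # 3
print(page_depths(after, "/")["/ford/mustang"])   # 2
```

Pages that never appear in the result are unreachable from the home page, which is another way to spot orphan pages.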

Another important step this classified website took was optimizing their sitemap. We found a significant number of expired pages that had been created for cars that had since been sold. These pages were no longer needed on the website, so they were redirected, but Google still had them in its index. Any time a searcher found one of these pages in search results, they would click and be redirected to a different page. This typically results in high customer abandonment and also lowers the percentage of indexable pages, which, as noted above, is associated with a lower crawl rate.

Updating the sitemap allowed the SEO team to tell Googlebot and other search engine crawlers exactly which pages are the most important, focusing crawl budget where it can have the most impact.
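One simple way to keep expired listings out of a sitemap is to include only URLs that currently respond with a 200 status. The sketch below assumes you already have each URL's status code, for example from a recent crawl. The function name and sample URLs are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """pages: iterable of (url, status_code) pairs.

    Only URLs answering 200 are listed, so redirected or removed
    listings drop out of the sitemap.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, status in pages:
        if status == 200:
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


# Invented sample data for the example.
sitemap_xml = build_sitemap([
    ("https://example.com/listing/1", 200),
    ("https://example.com/listing/2", 301),  # sold car, now redirected
    ("https://example.com/make/ford", 200),
])
print(sitemap_xml)
```

Regenerating the sitemap on a schedule keeps it in step with a constantly changing inventory.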

The results: 19x increase in crawl activity to strategic pages

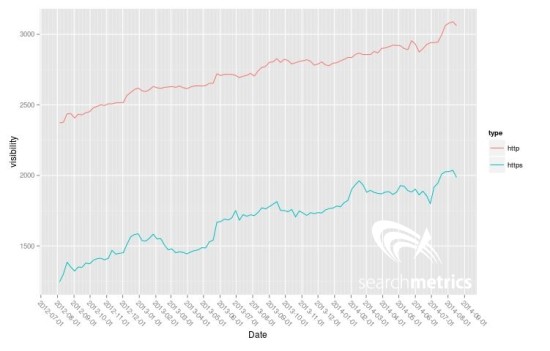

By updating the robots.txt file, improving internal linking, and updating the sitemap, this auto marketplace website experienced a 19x increase in crawl activity to their strategic pages in just six weeks.

Cutting the number of known URLs by half focused crawl budget on valuable content, while increasing internal linking helped search engines discover more of the most important pages.

Increased crawling is great, but we know that’s only the first step in the process of profiting from SEO. How did all of these changes impact organic search traffic?

The link between increased crawl of important pages and organic traffic was obvious: the website doubled its organic search traffic within three months of making these changes.

Improve your crawl, improve your revenue

Crawl budget optimization may seem miles away from your revenue goals, but as you can see, how search engines crawl your website plays a huge role when it comes to earning organic search traffic to your most lucrative pages.

If Google isn’t crawling your high-value pages, they won’t be indexed. If those pages aren’t in the index, searchers won’t be able to find them. If searchers can’t find them, they won’t get the traffic that can lead to revenue for your business.

Botify unifies all your data, from crawling to conversions, so that you can see how even technical optimizations like robots.txt, internal linking, and sitemap edits are impacting your organic traffic and revenue. When you have millions of web pages to keep track of, you need a solution to manage all this data at scale.

Whether you manage a classified website or another type of enterprise-level website and you want to see how Botify works, we’d love to walk you through it! Book your demo with Botify and see it in action.