Crawl Speed: How Many Pages/Second? 7 Points To Take Into Account

You are about to start a crawl on your website. Where should you set the slider for the number of pages per second?

The Botify crawler can go faster than 200 p/s. What’s the maximum crawl speed your site will tolerate without degrading performance for users?

Confident users will say: “No problem, we can crawl at 10 pages per second, or even 20! Considering the number of concurrent users our web server can serve, no worries!”

Perhaps. It’s not certain, though. Sure, a crawler will request fewer pages than all concurrent users combined. We could expect the additional load generated by the crawler to remain negligible, and unnoticeable from a performance perspective. Except that… quite often, a website undergoing a fast crawl will see its performance decrease.

Why is that so? Simply because robots and users don’t behave the same way at all. They don’t apply the same stress points to the server. In addition, optimization mechanisms meant for users don’t work well for robots. They can even worsen the situation.

Differences Between Users’ Visits and Robots’ Crawl

- A crawler explores all pages, and never requests the same page twice. Users often request the same pages (as other users) and never visit some of them (search pages, filter combinations, deep pages…).

- Unlike users, the crawler doesn’t use cookies.

GZIP or Not GZIP?

It depends.

- A crawl without the GZIP option (that is, without the “Accept-Encoding: gzip” HTTP header) on a website which delivers compressed, pre-computed (cached) pages very fast will induce heavy computing on the server or proxy, which will need to decompress all pages.

- On the other hand, a crawl which accepts GZIP and requests pages that are not pre-compressed will also induce CPU-intensive processing, if the server systematically delivers GZIP pages.

The Botify crawler can crawl with or without GZIP. In the Botify Analytics interface, GZIP is enabled by default, and you can disable this option in the advanced settings, crawler tab. For a crawl within Botify Logs Analyzer, just let the team know when you ask for a new crawl.

What can you do?

If you suspect that your web server does not deliver pre-compressed GZIP pages but compresses them on the fly, then it’s best to disable the GZIP option (and if you plan on crawling faster than 10 pages per second, that is all the more reason to do without GZIP).

The wider question of whether using GZIP has a positive impact is far from obvious. We need to consider the available bandwidth vs available CPU and find the right compromise. Here is an excellent article on GZIP, if you wish to explore this.
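To get a rough feel for the bandwidth versus CPU compromise, one option is to time on-the-fly compression of a representative page and compare it with the bytes saved. The sketch below uses Python’s standard `gzip` module; the function name `gzip_tradeoff` and the sample page are assumptions made for illustration, and the figures it returns only describe the machine it runs on, not your web server.

```python
import gzip
import time


def gzip_tradeoff(page, repeats=50):
    """Return (average CPU seconds per compression, compressed/original size ratio)."""
    if repeats < 1:
        raise ValueError("repeats must be at least 1")
    start = time.perf_counter()
    for _ in range(repeats):
        compressed = gzip.compress(page)
    seconds_per_page = (time.perf_counter() - start) / repeats
    return seconds_per_page, len(compressed) / len(page)


if __name__ == "__main__":
    # Hypothetical page body; replace it with real HTML from your own site.
    sample = b"<li class='item'>product</li>\n" * 2000
    seconds, ratio = gzip_tradeoff(sample)
    print(f"{seconds * 1000:.3f} ms per page, {ratio:.1%} of original size")
```

Multiplying the time per page by the planned crawl rate gives an order of magnitude for the extra CPU time spent each second if every crawled page is compressed on the fly.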

Session Management which Drags Performance Down

Any website which records user sessions (for instance, using a cookie coupled with database records, or sometimes a memory cache) will slow down when pages are requested by a crawler. A user who visits 25 pages within 10 minutes will only trigger one write operation and 24 reads. The crawler will trigger 25 writes, as it does not send any cookie and creates a new session with each page. As write operations are among the slowest for the server, this will impact response time. In addition, these sessions will “fill up” the system – crawling a million pages will create one million sessions.

What can you do?

Pro tip: don’t create a session (especially if it is recorded in a database) when the request comes from a known robot. Do this for the Botify user-agent (or a custom user-agent reserved for internal use) and, even more importantly, for Googlebot and other search engine robots, which crawl all the time.
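As a minimal sketch of that tip, the check below decides whether a request deserves a new session record. The function name, the token list and the substring matching are all assumptions made for illustration; a real deployment would maintain its own list of robots and may also verify crawler IP addresses, since a user-agent string can be spoofed.

```python
# Illustrative substrings only (assumption): extend with the robots you know.
KNOWN_BOT_TOKENS = ("botify", "googlebot", "bingbot")


def should_create_session(user_agent, has_session_cookie):
    """Return True only when a new session record is worth writing."""
    if has_session_cookie:
        return False  # the session already exists: this is a read, not a write
    ua = (user_agent or "").lower()
    # No cookie: create a session unless the user-agent looks like a known robot.
    return not any(token in ua for token in KNOWN_BOT_TOKENS)
```

With this kind of check, a crawl of one million pages no longer creates one million session records, while a regular visitor still gets a session on the first page view.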

Cache Systems Inefficiency, Sometimes Leading to Cache Pollution

Many websites are placed behind a cache system. This type of optimization is very efficient for users, who tend to visit the same pages: 1 million page views can easily correspond to no more than 1,000 distinct pages. The cache then works wonders, and the site seems very fast.

But a crawler which requests 1 million pages will request 1 million distinct pages. Very quickly, we find ourselves in a situation where requested pages are never in the cache. Web servers are more strained, and the website becomes slower for everybody, users as well as the crawler.

In addition, if pages explored by the crawler are in turn stored in the cache (depending on cache settings), the cache system will store pages that users rarely or never request, while dropping some of the pages that are often requested by users. The website becomes slower for everybody.

What can you do?

Set up the cache system so that it applies specific rules to robots: deliver cached pages if they are available (cache hit), but never store a page which wasn’t in the cache (cache miss) and had to be requested from the server. The cache must only store pages requested by users.

Advanced optimization: also apply this type of optimization to lower-level caches. Do this not only for HTML proxy-caches which store web pages, but also for database and hard drive caches.
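The robot-specific cache rule can be sketched as a small in-memory cache. This is an illustration of the policy rather than a configuration for any particular cache product: the class name `RobotAwareCache`, the `fetch_from_origin` callable and the `is_robot` flag are assumptions, and a real cache would also handle expiry and eviction.

```python
class RobotAwareCache:
    """Serve cache hits to everyone, but let only user requests fill the cache."""

    def __init__(self, fetch_from_origin):
        self._store = {}
        self._fetch = fetch_from_origin  # callable: url -> page content

    def get(self, url, is_robot):
        if url in self._store:
            return self._store[url]  # cache hit: robots benefit too
        page = self._fetch(url)  # cache miss: ask the web server
        if not is_robot:
            self._store[url] = page  # only pages requested by users are stored
        return page

    def __contains__(self, url):
        return url in self._store
```

A robot requesting a deep page gets it from the web server without pushing anything into the cache, so the pages users request most often stay cached.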

Crawler Impact on Load Balancing

Users usually come from many different IP addresses. A load balancing system distributes the load across several front-end servers, based on IP addresses.

A crawler usually sends all its requests from the same IP address (Botify does), or from a very small number of IP addresses.

If we treat robots the same way as users, the front-end server that receives all the crawler’s requests will be heavily loaded compared to the others. Unlucky users who happen to be directed to the same server will experience slower performance.

What can you do?

Check how the load balancer behaves towards robots (no “sticky sessions” for instance).

Alternative: dedicate one front-end server to robots, to avoid impacting users in case performance goes down, and to contain potential problems.
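The dedicated-server alternative can be sketched as a routing function. This assumes a load balancer that picks a front-end server by hashing the client IP address, which is only one possible policy; the function name and the server names are hypothetical.

```python
import zlib


def pick_frontend(client_ip, is_robot, user_servers, robot_server):
    """Send robots to their own server; spread users by hashing their IP."""
    if is_robot:
        return robot_server  # crawler load stays away from user-facing servers
    index = zlib.crc32(client_ip.encode("ascii")) % len(user_servers)
    return user_servers[index]
```

Without the robot branch, a crawler sending every request from one IP address would always hash to the same server, which is exactly the imbalance described above.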

Computing-Intensive Pages

One of the objectives of a crawler is to find out what’s deep down the website. Deep pages are rarely visited by users, and that’s also where we’ll find, for instance, search pages with heavy pagination, navigation pages with many filter combinations, and low-quality pages generated automatically. Because of their nature, there are a lot of them. They also tend to be computing-intensive, as they require more complex queries than pages found higher in the website (and they are not cached, as they are not visited by users).

What can you do?

Monitor the crawl speed or the website load, and slow down the crawl if performance goes down.

Botify Analytics allows you to monitor the crawl and change the crawl speed.
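If you automate this kind of adjustment with your own tooling, the rule “slow down when performance goes down” could look like the sketch below. It is not a description of how Botify adjusts speed: the function name, the 1.5x degradation threshold, the halving step and the rate limits are all arbitrary assumptions chosen for illustration.

```python
def next_crawl_rate(current_rate, avg_response_ms, baseline_ms,
                    min_rate=1.0, max_rate=10.0):
    """Halve the rate when responses degrade, otherwise recover slowly."""
    if avg_response_ms > 1.5 * baseline_ms:
        proposed = current_rate / 2  # back off quickly when the site struggles
    else:
        proposed = current_rate + 0.5  # recover gradually when it is healthy
    # Keep the result within the configured bounds.
    return max(min_rate, min(max_rate, proposed))
```

Backing off quickly and recovering slowly is a deliberately cautious choice: it favours user experience over crawl duration.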

In addition to these factors, other potential problems are more specifically linked to high-frequency crawls:

TCP Connections Persistence

During a fast crawl, the Web server can enter a “degraded” mode for TCP connections management.

Most bots make little or no use of the HTTP “keep-alive” option. This option keeps the connection open once a page has been received, to avoid opening another one immediately afterwards. Without “keep-alive”, a bot creates a new connection for each page. On the other hand, most Web servers are optimized for re-used connections (keep-alive), and switch to a degraded mode otherwise.

What can you do?

Check that the crawler you are using is able to make ample use of the “keep-alive” option. Botify does.
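For a home-made crawler, connection reuse can be sketched with Python’s standard `http.client` module, which keeps an HTTP/1.1 connection open between requests as long as the server allows it. The function name `fetch_with_keep_alive` is an assumption made for illustration, and this is not a description of how the Botify crawler is implemented.

```python
import http.client


def fetch_with_keep_alive(host, port, paths):
    """Fetch several paths over one reused TCP connection."""
    conn = http.client.HTTPConnection(host, port, timeout=10)
    results = []
    try:
        for path in paths:
            conn.request("GET", path)
            response = conn.getresponse()
            body = response.read()  # drain the body before reusing the socket
            results.append((path, response.status, len(body)))
    finally:
        conn.close()
    return results
```

If the server closes the connection after a response, `http.client` opens a new one on the next request, so the sketch still works but loses the benefit of keep-alive.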

Potential Bandwidth Issues

Some Web servers are optimized to serve pre-generated static pages. In that case, when crawling very fast, there can be a significant performance drop due to a bottleneck where we don’t expect it: there is not enough available bandwidth at the front of the website. Bandwidth usage is usually not one of the most closely monitored indicators – which tend to be pages per second, errors per second, visits per page, etc.

What can you do?

Do a little arithmetic to evaluate what crawl rate, added to user traffic, would bring you close to the bandwidth limit. Also monitor bandwidth, for the crawl as well as globally, at the Web server level.
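That arithmetic can be sketched as follows. The function names, the decimal units (1 KB = 1,000 bytes, 1 Mbit = 1,000,000 bits) and the 80% headroom factor are assumptions; the average page size, link capacity and user traffic must come from your own measurements.

```python
def bandwidth_mbps(pages_per_second, avg_page_kb):
    """Bandwidth used by a crawl, in megabits per second."""
    # kilobytes -> bytes -> bits, then bits -> megabits
    return pages_per_second * avg_page_kb * 1000 * 8 / 1_000_000


def max_crawl_rate(link_mbps, user_mbps, avg_page_kb, headroom=0.8):
    """Pages per second that fit in the link once user traffic is served."""
    available = link_mbps * headroom - user_mbps  # keep a safety margin
    if available <= 0:
        return 0.0  # user traffic alone already exceeds the safe share
    return available / bandwidth_mbps(1, avg_page_kb)
```

For instance, crawling 20 pages per second with pages of 100 KB on average uses 20 x 100 x 8 / 1,000 = 16 Mbit/s, on top of whatever users already consume.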

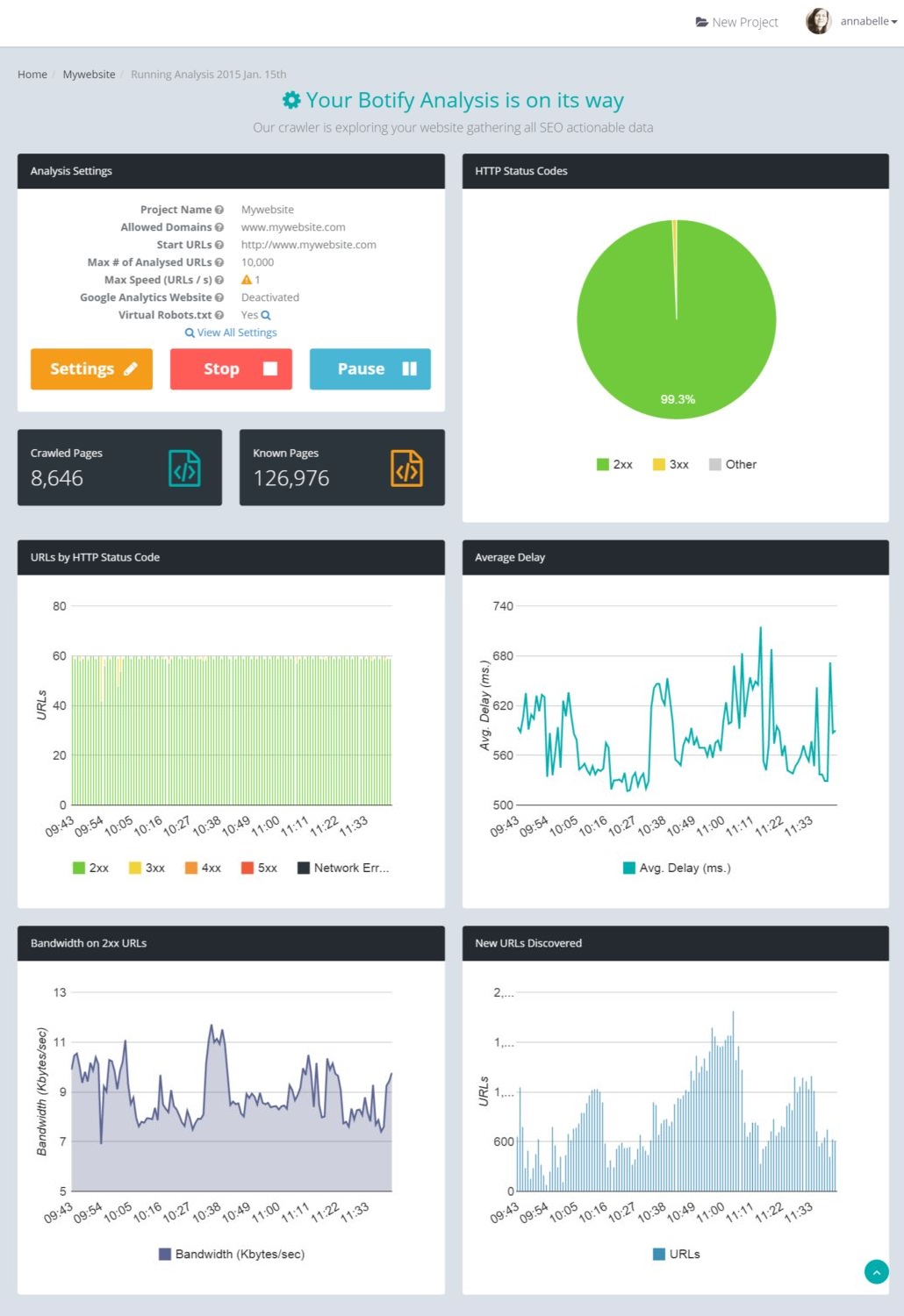

Real-Time Crawl Monitoring with Botify Analytics

With Botify Analytics, you can, in real time, see the number of crawled URLs, the average response time and the bandwidth used by the crawl. The information is displayed for the last 2 hours, minute by minute.

Here is an example:

[the illustration has been updated to show the new Botify interface]

And you can change the crawl speed while crawling. No need to pause the crawl, simply click on “Settings”.

If you notice that the actual crawl speed is lower than what you entered during the crawl setup, it means that the website does not serve pages fast enough. The Botify crawler is cautious: it won’t try to get the N pages per second you entered at any cost. Instead, it will always limit the number of simultaneous connections to N, which can become the limiting factor if the website is not fast enough.
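A simplified model shows why the connection limit caps the speed. Assuming each connection handles one request at a time, N connections with an average response time of t seconds deliver at most N / t pages per second, and the crawler never exceeds the N pages per second you asked for. The function name below is hypothetical and the model ignores network latency variations.

```python
def achievable_rate(target_pages_per_second, avg_response_seconds):
    """Upper bound on crawl speed with N = target simultaneous connections."""
    n = target_pages_per_second
    # Each connection completes 1 / avg_response_seconds pages per second.
    return min(n, n / avg_response_seconds)
```

With a target of 10 pages per second, pages served in 0.5 seconds leave the target reachable, while pages served in 2 seconds cap the crawl at 5 pages per second.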